The conceptual framework of data pipelines helps us to better organize and execute everyday data engineering tasks. A DAG (directed acyclic graph) is a collection of nodes and edges that describes the order of operations for a data pipeline. A node is a step in the data pipeline process.

Data partitioning is the process of isolating data to be analyzed by one or more attributes, such as time (schedule partitioning), conceptually related data grouped into discrete groups (logical partitioning), data size (size partitioning) or location. Partitioning leads to faster and more reliable pipelines, because smaller datasets, shorter time periods and related concepts are easier to debug than large amounts of data and unrelated concepts. Tasks operating on partitioned data may also be more easily parallelized.

Schedules improve data quality by limiting our analysis to the data relevant to a time period. If we use schedules appropriately, they are also a form of data partitioning, which can increase the speed of our pipeline runs. With the help of schedules we can also leverage already completed work. For example, we would only need to aggregate the current month and add it to the existing totals instead of aggregating the data of all time.

If we answer the following questions, we can find an appropriate schedule for our pipelines:

- What is the average size of the data for a time period? The more data we have, the more often the pipeline needs to be scheduled.
- How frequently is data arriving, and how often do we need to perform analysis? If the company needs data on a daily basis, that is the driving factor in determining the schedule.
- What is the frequency of related datasets? A rule of thumb is that the frequency of a pipeline's schedule should be determined by the dataset in our pipeline that requires the most frequent analysis.
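The idea of reusing completed work through schedule partitioning can be sketched in a few lines of plain Python. This is only an illustration under assumptions: the sales records, the function names `partition_by_month` and `update_totals`, and the monthly granularity are all made up for this example and are not part of Airflow.

```python
from collections import defaultdict
from datetime import date


def partition_by_month(records):
    """Group (day, amount) records into partitions keyed by (year, month)."""
    partitions = defaultdict(list)
    for day, amount in records:
        partitions[(day.year, day.month)].append(amount)
    return dict(partitions)


def update_totals(existing_totals, partitions, month):
    """Aggregate only the given month's partition and merge it into the totals.

    Earlier months are left untouched, so already completed work is reused.
    """
    totals = dict(existing_totals)
    totals[month] = sum(partitions.get(month, []))
    return totals


# Hypothetical sample data: (date of sale, amount).
sales = [
    (date(2024, 1, 5), 100),
    (date(2024, 1, 20), 50),
    (date(2024, 2, 3), 70),
    (date(2024, 2, 14), 30),
]

partitions = partition_by_month(sales)

# Totals produced by an earlier run that covered January.
previous_totals = {(2024, 1): 150}

# The February run only touches February's partition.
totals = update_totals(previous_totals, partitions, (2024, 2))
grand_total = sum(totals.values())
print(totals)
print(grand_total)
```

The February run never reads the January records again. It only adds one new entry to the totals, which is why a well-chosen schedule can make pipeline runs faster.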

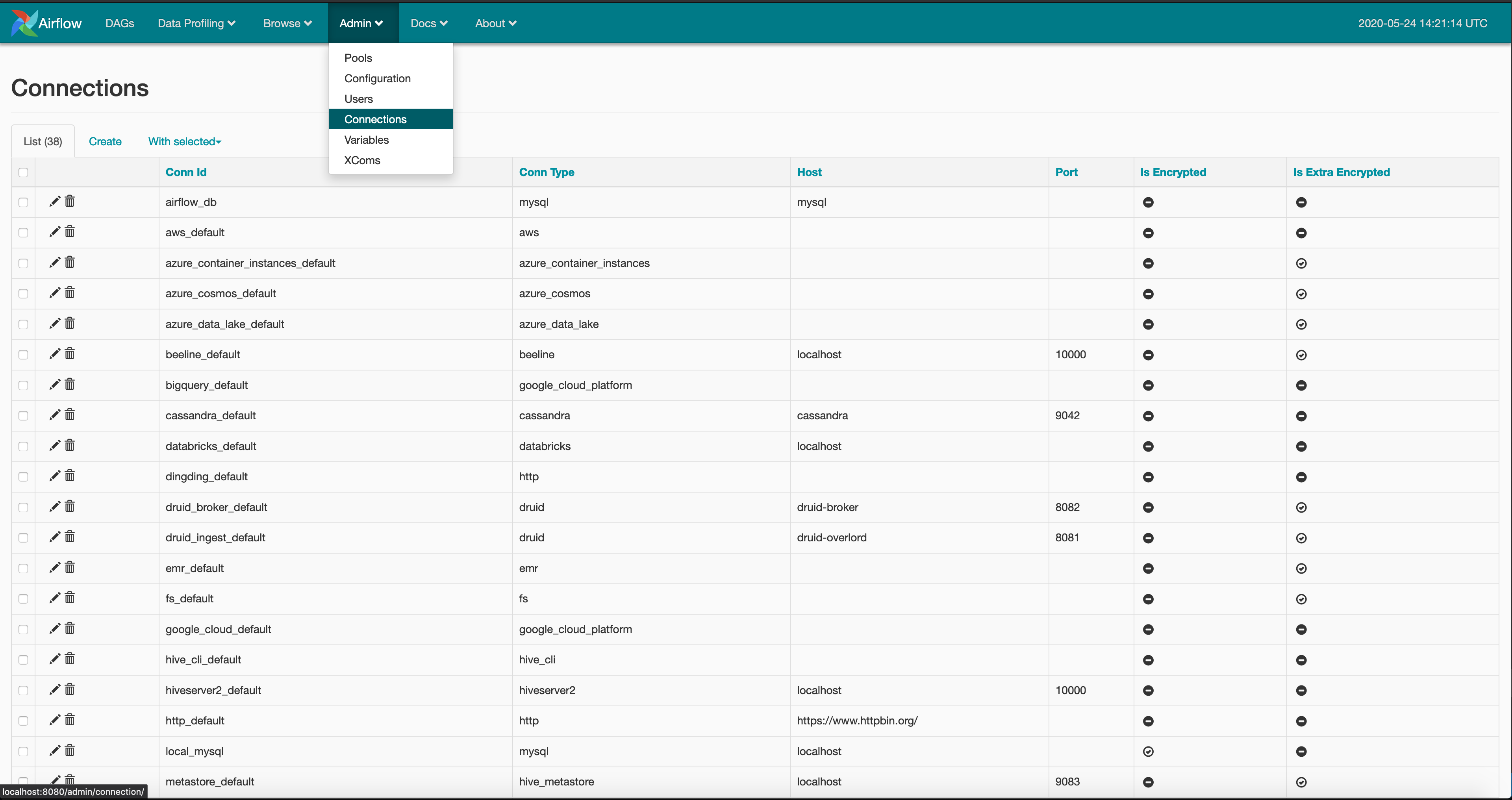

Schedules allow us to make assumptions about the scope of the data. The scope of a pipeline run can be defined as the time from the end of the last execution until the current execution.

Data validation is the process of ensuring that data is present, correct and meaningful. Ensuring the quality of your data through automated validation checks is a critical step when working with data.

The data lineage of a dataset describes the discrete steps involved in the creation, movement and calculation of that dataset. It is important for the following reasons:

- If we can describe the data lineage of a dataset or analysis, we build confidence in our data consumers, such as engineers, analysts, data scientists and stakeholders. If the data lineage is unclear, it is very likely that our data consumers will not trust or want to use the data.
- If we can surface data lineage, everyone in the company is able to agree on the definition of how a particular metric is calculated.
- If each step of the data movement and transformation process is well described, it is easy to find problems when they occur.

Airflow DAGs are a natural representation for the movement and transformation of data. The following components can be used to track data lineage: the rendered code tab for a task, the graph view for a DAG, and the historical runs under the tree view.
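To make the link between a DAG and data lineage more concrete, here is a minimal sketch in plain Python. It does not use the Airflow API. The task names and the helper `upstream_lineage` are hypothetical, and the only library call is `graphlib.TopologicalSorter` from the standard library (Python 3.9+).

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dag = {
    "load_trips": set(),
    "load_stations": set(),
    "join_trips_stations": {"load_trips", "load_stations"},
    "daily_traffic_report": {"join_trips_stations"},
}


def upstream_lineage(dag, task):
    """Return every task that directly or indirectly feeds into `task`."""
    seen = set()
    stack = list(dag[task])
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(dag[current])
    return seen


# A valid execution order: every task appears after its dependencies.
execution_order = list(TopologicalSorter(dag).static_order())

# The lineage of the report is the set of steps that produced it.
lineage = upstream_lineage(dag, "daily_traffic_report")
print(execution_order)
print(sorted(lineage))
```

If the report looks wrong, the lineage set tells us exactly which upstream steps to inspect. That is the same information the graph view of a DAG gives us visually.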

In the following lines I write up everything I learned about data pipelines in the Udacity online class. The write-up gives a general overview of data pipelines, introduces the core concepts of Airflow, and provides some links to code examples on GitHub.

A data pipeline is a series of steps in which data is processed, mostly ETL or ELT. Data pipelines provide a set of logical guidelines and a common set of terminology. The conceptual framework of data pipelines will help you better organize and execute everyday data engineering tasks. Examples of use cases are automated marketing emails, real-time pricing, or targeted advertising based on browsing history.

There can be different requirements for how to measure data quality, depending on the use case:

- Data must be accurate to some margin of error.
- Data must arrive within a given timeframe from the start of the execution.
- Pipelines must run on a particular schedule.
- Data must not contain any sensitive information.
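Some of the data quality requirements above can be expressed as small automated checks. The following is a rough sketch in plain Python, not an Airflow operator: the function names, the list of sensitive field names, the thresholds and the sample rows are all assumptions made for illustration. The schedule requirement is left out because it is enforced by the scheduler rather than by a check on the data.

```python
from datetime import datetime, timedelta

# Hypothetical field names that count as sensitive in this sketch.
SENSITIVE_FIELDS = {"ssn", "credit_card", "password"}


def check_accuracy(measured, expected, margin):
    """True if `measured` is within a relative `margin` of `expected`."""
    return abs(measured - expected) <= margin * abs(expected)


def check_arrival(execution_start, arrival_time, max_delay):
    """True if the data arrived no later than `max_delay` after the start."""
    return arrival_time - execution_start <= max_delay


def check_no_sensitive_fields(rows):
    """True if no row contains a field listed in SENSITIVE_FIELDS."""
    return all(SENSITIVE_FIELDS.isdisjoint(row) for row in rows)


def run_checks(checks):
    """Return the names of all failed checks (an empty list means success)."""
    return [name for name, passed in checks.items() if not passed]


# Hypothetical run: the values below are made up for illustration.
rows = [{"user_id": 1, "amount": 19.99}, {"user_id": 2, "amount": 5.00}]
start = datetime(2024, 2, 1, 0, 0)
arrived = datetime(2024, 2, 1, 0, 45)

failed = run_checks({
    "accuracy": check_accuracy(measured=24.99, expected=25.00, margin=0.01),
    "arrival": check_arrival(start, arrived, max_delay=timedelta(hours=1)),
    "no_sensitive_fields": check_no_sensitive_fields(rows),
})
print(failed)
```

In a real pipeline, a non-empty list of failed checks would typically fail the task so that bad data does not reach downstream consumers.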
